What is the Global AI Accelerator Chip Market Size?

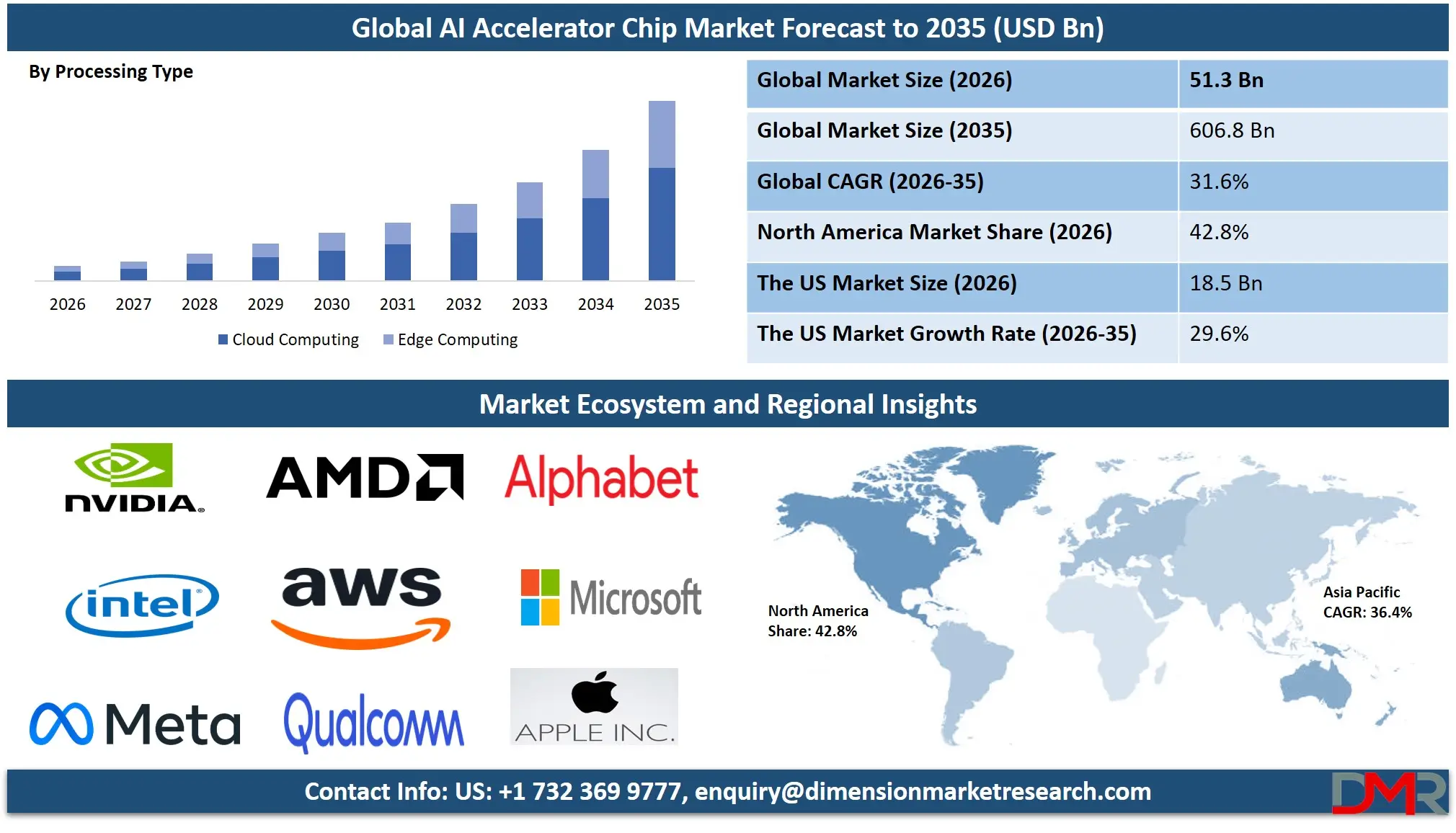

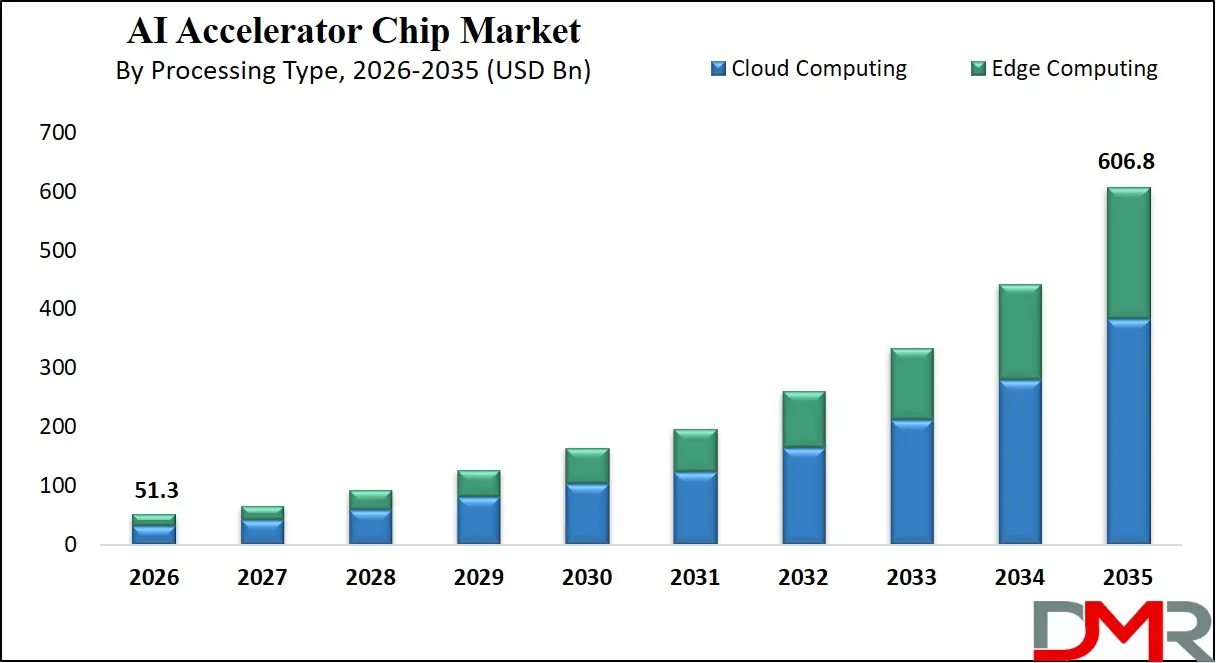

The Global AI Accelerator Chip Market is estimated at USD 51.3 billion in 2026 and is projected to grow at a compound annual growth rate (CAGR) of 31.6% to reach USD 606.8 billion by 2035, driven by advancements in generative AI models, edge inference engines, chiplet-based architectures, and power-optimized tensor processing units.
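
The relationship between the two headline values and the growth rate can be checked with a few lines of arithmetic. The sketch below assumes nine compounding periods (2026 to 2035); that horizon and the function names are illustrative assumptions, not something the report specifies.

```python
# Figures quoted in the report; the 9-year horizon (2026 -> 2035) is an assumption.
BASE_VALUE_USD_BN = 51.3      # estimated 2026 market size
TARGET_VALUE_USD_BN = 606.8   # forecast 2035 market size
STATED_CAGR = 0.316           # 31.6% per year
YEARS = 2035 - 2026           # nine compounding periods


def project_value(base: float, rate: float, years: int) -> float:
    """Compound `base` forward at `rate` for `years` periods."""
    return base * (1.0 + rate) ** years


def implied_cagr(base: float, target: float, years: int) -> float:
    """Solve target = base * (1 + r) ** years for r."""
    return (target / base) ** (1.0 / years) - 1.0


projected_2035 = project_value(BASE_VALUE_USD_BN, STATED_CAGR, YEARS)
implied_rate = implied_cagr(BASE_VALUE_USD_BN, TARGET_VALUE_USD_BN, YEARS)

print(f"Projected 2035 value at the stated CAGR: USD {projected_2035:.1f} bn")
print(f"CAGR implied by the two endpoints: {implied_rate * 100:.1f}%")
```

Under that nine-period assumption, compounding at the stated rate lands within about USD 1 billion of the 2035 forecast, and the rate implied by the two endpoints rounds to the stated 31.6%.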

The AI Accelerator Chip Market has grown on the back of expanding deployment of large language models, national security requirements for autonomous AI capabilities, and the commitment of both government and commercial capital to AI infrastructure across data centers and edge devices worldwide. The market also encompasses emerging technologies such as real-time inference latency optimization, cloud-based AI workload orchestration, and automated model pruning used in AI chip deployment projects.

Cloud providers, AI startups, and defense contractors are investing heavily in modernization to improve memory bandwidth efficiency, reduce the risk of compute underutilization, and increase model inference speed. The move toward automation, predictive scaling of accelerator utilization, and smart workload splitting (training on GPUs, inference on ASICs) is increasing adoption. Moreover, the need to operationalize national AI strategies and the importance of sustainable AI compute are driving the digital transformation of AI infrastructure, making AI accelerator chips an essential part of the future intelligent economy worldwide.
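
The workload-splitting idea mentioned above (training on GPUs, inference on ASICs) can be pictured as a simple routing table. The following is a minimal sketch only; the `Job` type, the pool names, and the job names are hypothetical and do not describe any vendor's scheduler.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    TRAINING = "training"
    INFERENCE = "inference"


@dataclass(frozen=True)
class Job:
    name: str
    phase: Phase


# Hypothetical pool names; the text only describes training on GPUs and inference on ASICs.
POOL_BY_PHASE = {
    Phase.TRAINING: "gpu",
    Phase.INFERENCE: "asic",
}


def split_workload(jobs: list[Job]) -> dict[str, list[str]]:
    """Group job names by the accelerator pool that serves their phase."""
    assignment: dict[str, list[str]] = {pool: [] for pool in POOL_BY_PHASE.values()}
    for job in jobs:
        assignment[POOL_BY_PHASE[job.phase]].append(job.name)
    return assignment


jobs = [
    Job("pretrain-llm", Phase.TRAINING),
    Job("chat-serving", Phase.INFERENCE),
    Job("fine-tune-vision", Phase.TRAINING),
]
plan = split_workload(jobs)
print(plan)
```

A production scheduler would also weigh utilization, memory capacity, and latency targets; the static mapping here only illustrates the split itself.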

The US AI Accelerator Chip Market

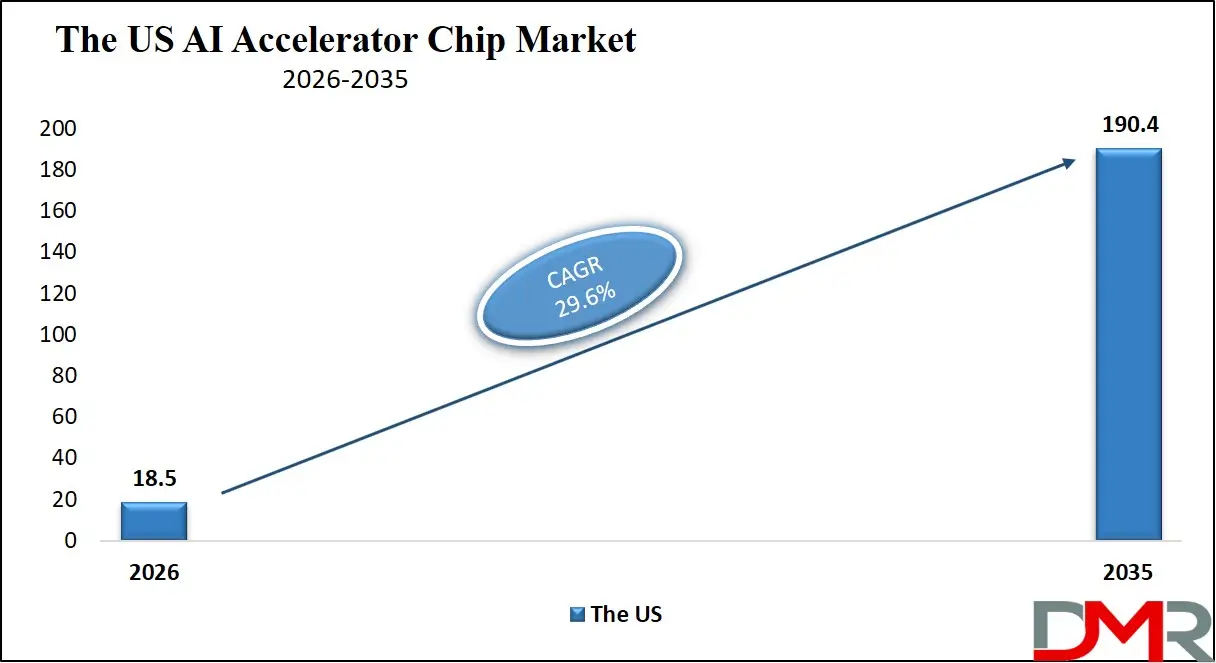

The US AI Accelerator Chip Market is estimated at USD 18.5 billion in 2026 and is projected to grow at a compound annual growth rate of 29.6% during the forecast period.

In the US, the AI accelerator chip market is driven by commercial cloud providers (e.g., AWS, Google, Microsoft), national security programs (e.g., AI-enabled defense systems, NSA's compute modernization), and the need to supplement reliance on general-purpose GPUs with more specialized, high-efficiency solutions. Investment is increasing in autonomous workload scheduling systems, telemetry-based predictive models of accelerator wear-out, and real-time chip thermal monitoring for anomaly detection. Federal funding programs, including the CHIPS Act and the National AI Initiative, encourage adoption. The Cloud Computing and Edge Computing segments dominate, and digital engineering tools improve chip design and simulation. Key players are focusing on chip reusability and supply chain partnerships to raise compute density and reliability. Regulatory frameworks that promote AI safety and verifiable model assurance also encourage the adoption of digital chip health monitoring, and the need for real-time thermal data and automated failover response further supports market growth.

Europe AI Accelerator Chip Market

The Europe AI Accelerator Chip Market is estimated at USD 9.4 billion in 2026 and is projected to grow at a CAGR of 29.1% during the forecast period.

Europe has a mature AI accelerator chip market that is strongly shaped by regulatory requirements and regional policies such as the EU AI Act, the European Chips Act, and sovereignty programs (e.g., the Gaia-X initiative launched by France and Germany, and Germany's AI research centers). Countries are also pursuing smart accelerator modularization to harmonize commercial and institutional workload requirements and to support interoperability across the cross-border supply chain. Innovation is driven by advanced manufacturing, such as chiplet-based 3D packaging and silicon photonics, and by high-reliability inference accelerators with built-in life-prediction algorithms. Public-private partnerships and the harmonization of AI compute standards facilitate adoption. Technologies like real-time power capping and smart contract-based telemetry sharing are commonly piloted in research-centric programs, and Europe is a frontrunner in the digital transformation toward energy-efficient, specialized AI chips.

Japan AI Accelerator Chip Market

The Japan AI Accelerator Chip Market is projected to be valued at USD 2.1 billion in 2026 and to grow at a CAGR of 33.8% from 2026 to 2035.

Japan has a mature AI accelerator chip market supported by high-performance processor design (e.g., Fujitsu's A64FX), memory-logic integration technology, and a broad ecosystem of robotics AI innovation. The country prioritizes automation, precision, and mission integrity, which it pursues through AI-driven thermal wear prediction models and predictive power management systems for accelerator assets. Growth is stimulated by government action under the AI Strategy 2025 and sustained investment in edge AI infrastructure. The high volume of robotics systems, autonomous vehicles, and factory automation requires efficient accelerator chips for real-time inference. Challenges include high validation costs for new chip architectures and integration with legacy systems, while opportunities lie in exporting mature edge and cloud AI accelerator technologies to Asia-Pacific markets.

Key Takeaways

- Market Size & Forecast: The Global AI Accelerator Chip Market is estimated to be valued at USD 51.3 billion in 2026 and is expected to grow to USD 606.8 billion by 2035.

- Growth Rate & Outlook: The market is expected to witness growth at a compound annual growth rate of 31.6% in the forecast period.

- Primary Growth Drivers: Technological progress in generative AI and edge inference to support LLMs, regulatory requirements for AI safety, and commercial data center deployment are some of the key drivers of growth in the market.

- Key Market Trends: The use of AI-driven workload scheduling, real-time thermal optimization, and transition to cloud-based accelerator telemetry and fleet management systems are some of the primary market trends.

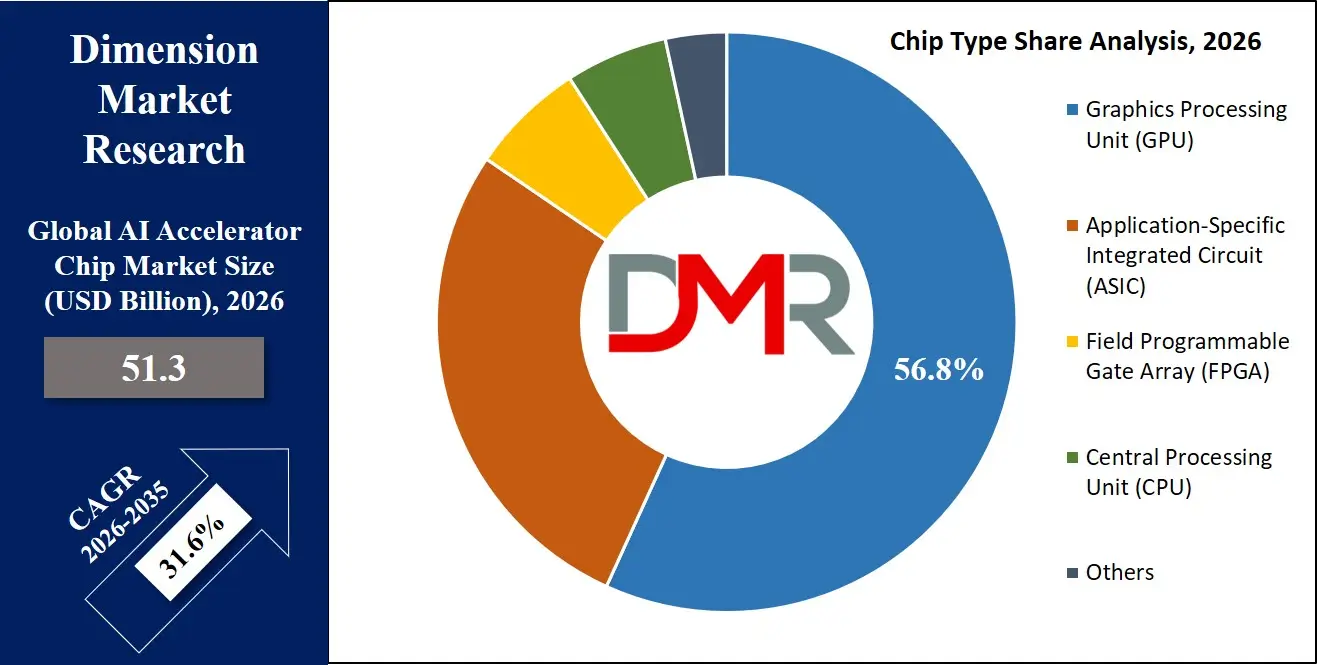

- By Chip Type: The GPU segment is anticipated to hold the majority share of the AI Accelerator Chip market in 2026.

- By Processing Type: The cloud computing segment is expected to hold the largest revenue share of the AI Accelerator Chip market in 2026.

- By AI Function: The inference segment is expected to hold the largest revenue share of the AI Accelerator Chip market in 2026.

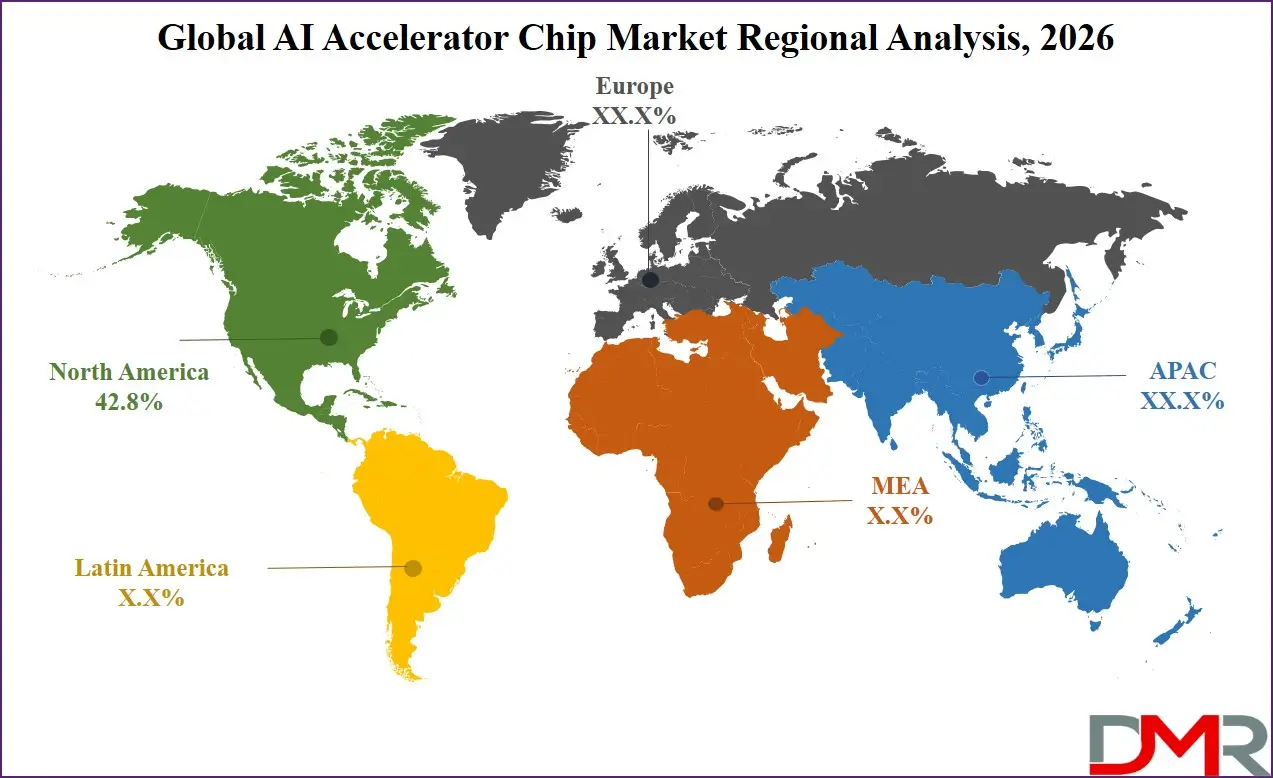

- Regional Leadership: North America is predicted to dominate the market with an estimated 42.8% share in 2026, supported by high cloud AI spending and defense AI investment.

What is the AI Accelerator Chip?

An AI accelerator chip is a specialized processor that accelerates artificial intelligence workloads, including deep learning training, real-time inference, computer vision, and natural language processing. It employs parallel compute architectures, tensor cores, systolic arrays, or neuromorphic designs to deliver high throughput and efficiency. Contemporary systems add real-time telemetry, chiplet-based multi-die packaging, and AI-assisted power management to ensure transparency, efficiency, and reliability. These chips support efficient model deployment and sustainable AI compute operations, and they help commercial, government, and research stakeholders direct funding toward scalable, long-term AI infrastructure. They also facilitate accountability by ensuring that accelerator performance data is quantified, tracked, and aligned with global AI sustainability objectives.

Use Cases

- Large Language Model Deployment and Fine-Tuning: AI accelerator chips support model training, inference, and real-time token generation, reducing latency while improving throughput, regulatory compliance, and AI safety.

- Thermal & Power Life Prediction Modeling (Reliability Risk): Mission data, such as cumulative compute hours and thermal cycling, is modeled to set redundancy margins and sustain failure-free operation, preserving long-term operational stability and investor trust.

- Commercial Cloud AI Services: Commercial cloud providers use GPU and ASIC accelerators for model training, fine-tuning, and inference to deliver AI-as-a-service, computer vision, and conversational AI with quantifiable, proven performance.

- Edge and Government Programs: More efficient edge accelerators support autonomous vehicles, defense drones, and smart sensors, facilitate national AI adoption, improve deployment reliability, and help implement policies such as AI compute governance and energy efficiency rules.
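
To make the thermal life prediction use case more concrete, the sketch below estimates consumed life from thermal-cycling history. It swaps in two textbook reliability forms, the Coffin-Manson relation for cycles to failure and Miner's rule for cumulative damage; the report does not say which models vendors actually use, and the constants, bin structure, and sample history are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThermalCycleBin:
    delta_t_c: float   # temperature swing of the cycle, in degrees Celsius
    cycles: int        # number of such cycles observed so far


# Hypothetical constants for illustration only; real values come from qualification data.
COFFIN_MANSON_C = 1.0e9
COFFIN_MANSON_Q = 2.5


def cycles_to_failure(delta_t_c: float) -> float:
    """Coffin-Manson form: N_f = C * (delta_T) ** (-q)."""
    return COFFIN_MANSON_C * delta_t_c ** (-COFFIN_MANSON_Q)


def consumed_life_fraction(history: list[ThermalCycleBin]) -> float:
    """Miner's rule: sum over bins of observed cycles / cycles to failure."""
    return sum(b.cycles / cycles_to_failure(b.delta_t_c) for b in history)


# Hypothetical telemetry summary: many small swings, fewer large ones.
history = [ThermalCycleBin(20.0, 50_000), ThermalCycleBin(40.0, 10_000)]
damage = consumed_life_fraction(history)
remaining_margin = 1.0 - damage
print(f"Consumed life: {damage:.1%}, remaining margin: {remaining_margin:.1%}")
```

Under Miner's rule the part is treated as worn out when the damage sum reaches 1, so the remaining margin is one way to express the redundancy headroom the bullet describes.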

How AI Is Transforming the Global AI Accelerator Chip Market

Artificial intelligence is transforming AI accelerator chips by enabling predictive modeling of workload behavior, automatic identification of anomalies in power-draw data, and real-time optimization of voltage-frequency scaling. AI algorithms can analyze telemetry and environmental data to detect degradation or performance drift and to optimize mission outcomes at scale. This approach is faster, more verifiable, and cheaper than manual data analysis.

Moreover, AI enhances mission assurance by offering adaptive compute scheduling, anticipating thermal threats to chip components, and intelligently prioritizing accelerator health monitoring. It also reduces the cost of baseline testing and ongoing performance tracking, allowing data center operators to shrink the cost and physical footprint of on-prem test campaigns while improving the reliability of AI workloads and their financial returns.
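
As an illustration of automatic anomaly identification in power-draw data, here is a minimal sketch based on a trailing-window z-score. This is a deliberately simple statistical stand-in rather than the AI models the section refers to; the window size, threshold, function name, and sample readings are all assumptions.

```python
from collections import deque
from statistics import mean, stdev


def detect_power_anomalies(samples_w, window=5, z_threshold=3.0):
    """Return indices whose reading deviates from the trailing window by more than z_threshold sigmas."""
    history = deque(maxlen=window)
    anomalies = []
    for index, watts in enumerate(samples_w):
        # Only score a sample once a full trailing window is available.
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0 and abs(watts - mu) / sigma > z_threshold:
                anomalies.append(index)
        history.append(watts)
    return anomalies


# Hypothetical power-draw readings in watts, with one spike at index 7.
power_draw_w = [300.0, 302.0, 299.0, 301.0, 300.0, 303.0, 298.0, 450.0, 301.0, 300.0]
print(detect_power_anomalies(power_draw_w))  # -> [7]
```

Note that the spike enters the trailing window after it is flagged and inflates the standard deviation for the next few samples; a more robust variant would exclude flagged readings or use a median-based spread.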

Market Dynamics

Key Drivers of the Global AI Accelerator Chip Market

Rapid Developments in Generative AI and Edge Inference

The market is being propelled by the rapid uptake of AI, high-efficiency tensor processing units, chiplet-based packaging, and real-time telemetry analytics. These technologies allow operators to monitor accelerator health in real time, identify performance anomalies early, predict end-of-life, and simplify ground verification. Consequently, operational uptime and inference efficiency improve markedly while the expense of manual telemetry analysis falls. The growth of large language models such as GPT and LLaMA, in particular, is accelerating demand for intelligent accelerator chips as data center operators lean toward automation and data-driven workload optimization.

Growing Focus on AI Regulation and Sustainable Compute

Governments and international bodies are increasingly engaged in AI energy efficiency policy, proposing compute efficiency measures such as the EU AI Act's sustainability provisions and the US Executive Order on AI. These frameworks are driving strong demand for efficient accelerator chips capable of lower-power inference and training. In parallel, global initiatives such as the UN ITU's AI for Good are encouraging the adoption of energy-efficient chip architectures. Growing calls for transparency in AI compute usage and carbon footprint are also increasing the need for verifiable and efficient accelerator chips in both commercial and government data centers.

Restraints in the Global AI Accelerator Chip Market

High Costs of Design and Advanced Packaging

AI accelerator chips are costly and time-consuming to validate, requiring thermal-chamber testing, power-delivery qualification, long-term reliability testing, and sophisticated telemetry facilities. Moreover, export control regulations, such as BIS restrictions on advanced chips, add complexity and expense. Together, these factors pose serious obstacles to new entrants and small AI hardware developers, lengthen deployment timelines, and raise initial capital needs.

Limited Standardization Across Accelerator Architectures

The industry still depends on several accelerator architectures, including GPUs, ASICs, FPGAs, and neuromorphic chips. However, the absence of standard software interfaces across accelerator platforms (beyond CUDA, ROCm, and OpenCL) is a significant challenge. Unlike the CPU or memory industries, AI systems lack universal plug-and-play standards, which makes integration complicated and expensive. This fragmentation lengthens development time and restricts broad interoperability of AI models.
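
What a shared software interface would buy can be sketched with a small abstraction layer. The `AcceleratorBackend` protocol and the two backend classes below are hypothetical placeholders and do not correspond to any existing standard or vendor API; the arithmetic inside each backend merely stands in for a vendor-specific runtime call.

```python
from typing import Protocol, Sequence


class AcceleratorBackend(Protocol):
    """Hypothetical minimal interface; not an existing industry standard."""

    name: str

    def run_inference(self, inputs: Sequence[float]) -> list[float]: ...


class GpuBackend:
    name = "gpu"

    def run_inference(self, inputs: Sequence[float]) -> list[float]:
        # Placeholder compute standing in for a vendor-specific runtime call.
        return [2.0 * x for x in inputs]


class AsicBackend:
    name = "asic"

    def run_inference(self, inputs: Sequence[float]) -> list[float]:
        # Same contract, different (placeholder) implementation.
        return [x + x for x in inputs]


def serve(backend: AcceleratorBackend, inputs: Sequence[float]) -> list[float]:
    """Application code depends only on the shared interface."""
    return backend.run_inference(inputs)


results = {b.name: serve(b, [1.0, 2.5]) for b in (GpuBackend(), AsicBackend())}
print(results)
```

Because `serve` is written against the interface alone, swapping one accelerator type for another requires no change to application code, which is the portability that fragmented toolchains currently make expensive.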

Growth Opportunities in the Global AI Accelerator Chip Market

Expansion of Emerging AI Programs

Developing AI nations such as Brazil, Indonesia, Nigeria, the UAE, and Vietnam are investing heavily in AI infrastructure and accelerator capability development. These regions present strong growth potential due to increasing demand for AI-based automation, computer vision, and NLP services. With fewer legacy system constraints, these markets provide opportunities for modern, cost-efficient accelerator technologies tailored for edge and cloud deployment.

Rising Demand for Edge AI Inference

Demand for advanced accelerator chips is rising with the growth of edge computing, autonomous systems, and real-time AI applications. These technologies play a vital role in smart cameras, industrial robots, and autonomous vehicles. As low latency becomes a major industry concern, edge inference capabilities are likely to be fundamental to future AI infrastructure.

Global AI Accelerator Chip Market Trends

AI-Driven Workload Monitoring and Predictive Analytics

On-chip AI is being used to monitor accelerator chips, detect anomalies in real time, and predict component wear. Digital twin models and machine learning algorithms are improving compute scheduling, system lifespan, and deployment reliability. This shift is transforming accelerator management from manual telemetry review to fully automated, continuously optimized system monitoring.

Cloud-Based Telemetry and Fleet Management Systems

Cloud computing and digital twin technologies are taking center stage in the operation of AI clusters. These platforms enable real-time storage and analysis of accelerator performance data, centralized fleet management, and remote monitoring of AI chip health. As operators of large AI fleets have experienced, cloud-based systems enhance transparency, lower on-prem infrastructure expenses, and enable quicker responses to workload changes across data centers.

Research Scope and Analysis

By Chip Type Analysis

The GPU segment is expected to remain the largest in 2026, accounting for about 56.8% of the global AI accelerator chip market, driven by its dominant use in large-scale model training, high-throughput parallel compute, and flexibility across diverse AI frameworks where maximum compute density and software ecosystem maturity are essential.

Meanwhile, the ASIC segment is witnessing strong growth, driven by rising demand for power-efficient inference in edge devices, data center fine-tuning, and custom workloads where energy efficiency is critical. Adoption is further supported by AI-based power capping, real-time efficiency diagnostics, and modular chiplet configurations that integrate multiple accelerator types for improved workload flexibility and endurance.

By Processing Type Analysis

The cloud computing segment is expected to dominate with approximately 64.3% market share in 2026, owing to its critical role in hosting large model training, high-volume inference, and centralized AI operations. Commercial and government buyers are shifting to higher-performance accelerator designs to achieve greater throughput and better energy efficiency. Cloud accelerator solutions are adaptable, which makes them easy to deploy and integrate with other infrastructure. Custom chips remain relevant, however, where deterministic low latency is required (e.g., autonomous vehicles, industrial robots). Hybrid deployments that use cloud and edge accelerators together are growing fastest, making accelerator portfolios more adaptable to different latency classes and workload contexts.

By AI Function Analysis

The inference segment is predicted to hold the highest share, at around 58.2% in 2026, owing to the central role of ASIC and GPU accelerators in real-time model serving, content generation, and anomaly detection. Integrated telemetry and control systems provide continuous throughput tracking, which improves the efficiency and reliability of AI operations. Model size and inference frequency depend on the application type, with NLP models running on transformer accelerators being the most common. The training segment covers high-throughput, long-duration compute, such as foundation model pre-training. The fastest-growing area is the others (e.g., neuromorphic) segment, which advances the automation of spiking neural networks and the standardization of accelerator interfaces through modular compute arrays and dynamic reconfiguration. The convergence of AI function integration and workload scheduling is producing smarter AI infrastructure, which spurs innovation and expands the addressable market.

By Application Analysis

The Natural Language Processing (NLP) segment is likely to take the lead with an estimated share of 35.8% in 2026, driven by strong adoption in chatbots, search engines, and enterprise AI deployments. However, the broader NLP and Computer Vision categories together account for the dominant accelerator demand base due to high inference volumes. Robotics continues to require robust accelerator chips for real-time sensor fusion and path planning. The Network Security segment supports lightweight AI models for packet inspection and threat detection. The fastest-growing category is the Computer Vision segment, driven by autonomous vehicle requirements and increasing smart city deployments.

By Industry Vertical Analysis

The IT & Telecom segment represents the largest industry vertical in 2026, accounting for approximately 28.7% share of the market, driven by rising autonomous driving deployments, sensor fusion requirements, and real-time inference regulations. Consumer Electronics forms the second-largest segment, utilizing AI accelerators for smartphones, smart speakers, and wearables. The Automotive sector is a key high-growth segment, fueled by increasing adoption of autonomous driving technologies, advanced driver-assistance systems (ADAS), and real-time sensor processing requirements. The fastest-growing area is the Telecom segment, driven by the need for low-latency, high-reliability accelerators for RAN optimization and network edge AI. With increasing AI adoption across industries, commercial, defense, and research agencies are spending more on purpose-built accelerator chips to improve inference success rates and the efficiency of AI operations.
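
The percentage shares quoted in the segment analysis can be translated into approximate 2026 values by applying them to the USD 51.3 billion headline estimate. The sketch below does only that arithmetic; the resulting values are implied by the quoted figures rather than stated in the report, and the dictionary keys and function name are illustrative.

```python
GLOBAL_MARKET_2026_USD_BN = 51.3  # headline estimate quoted in the report

# Leading-segment shares quoted in the segment analysis (percent of the 2026 market).
LEADING_SEGMENT_SHARE_PCT = {
    "Chip type: GPU": 56.8,
    "Processing type: cloud computing": 64.3,
    "AI function: inference": 58.2,
    "Application: NLP": 35.8,
    "Industry vertical: IT & Telecom": 28.7,
}


def implied_value_usd_bn(share_pct: float, total: float = GLOBAL_MARKET_2026_USD_BN) -> float:
    """Convert a percentage share into an implied value in USD billion."""
    return total * share_pct / 100.0


implied_values = {
    segment: round(implied_value_usd_bn(share), 1)
    for segment, share in LEADING_SEGMENT_SHARE_PCT.items()
}
for segment, value in implied_values.items():
    print(f"{segment}: ~USD {value} bn")
```

Each share belongs to a different segmentation of the same market, so the implied values overlap and should not be added together.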

The Global AI Accelerator Chip Market Report is segmented based on the following:

By Chip Type

- Graphics Processing Unit (GPU)

- Application-Specific Integrated Circuit (ASIC)

- Field Programmable Gate Array (FPGA)

- Central Processing Unit (CPU)

- Others

By Processing Type

- Cloud Computing

- Edge Computing

By AI Function

- Inference

- Training

- Others

By Application

- Natural Language Processing (NLP)

- Computer Vision

- Robotics

- Network Security

By Industry Vertical

- Automotive

- Consumer Electronics

- Healthcare

- Manufacturing

- Financial Services

- Telecom

- Others

Regional Analysis

Leading Region in the AI Accelerator Chip Market

It is projected that North America will take the lead in the global AI accelerator chip market (by value), covering a market share of about 42.8% in the year 2026. The region's dominance is driven by strong cloud AI workload cadence (US-based hyperscalers), high accelerator chip prices relative to other regions, a mature supply chain for advanced packaging and high-bandwidth memory, and the presence of key chip designers and component suppliers. The widespread adoption of advanced GPU and ASIC accelerators for LLM deployment, defense AI missions, and government AI programs further strengthens North America's leading position in the market. Additionally, continuous investments in AI-enabled chip health monitoring and chiplet manufacturing capabilities are further reinforcing regional technological leadership.
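
The regional share can be cross-checked against the US figure given earlier in the report. This sketch assumes that the 42.8% share applies to the USD 51.3 billion global estimate and that the USD 18.5 billion US market sits inside the North America total; the variable names are illustrative.

```python
GLOBAL_MARKET_2026_USD_BN = 51.3   # global estimate quoted in the report
NORTH_AMERICA_SHARE_PCT = 42.8     # regional share quoted in the report
US_MARKET_2026_USD_BN = 18.5       # US estimate quoted in the report

# Implied regional value, and what is left once the US figure is subtracted.
north_america_usd_bn = GLOBAL_MARKET_2026_USD_BN * NORTH_AMERICA_SHARE_PCT / 100.0
rest_of_north_america_usd_bn = north_america_usd_bn - US_MARKET_2026_USD_BN
us_share_of_global_pct = US_MARKET_2026_USD_BN / GLOBAL_MARKET_2026_USD_BN * 100.0

print(f"Implied North America value: USD {north_america_usd_bn:.2f} bn")
print(f"Implied remainder outside the US: USD {rest_of_north_america_usd_bn:.2f} bn")
print(f"US share of the global market: {us_share_of_global_pct:.1f}%")
```

The remainder is positive, so the regional and US figures are mutually consistent under these assumptions.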

Fastest-Growing Region in the AI Accelerator Chip Market

Asia-Pacific is the fastest-growing region, supported by strong AI deployment targets (China, India, Japan), increasing semiconductor sovereignty initiatives, rising investments in domestic AI chip capabilities, and growing adoption of edge inference systems. The region benefits from well-established manufacturing capacity, increasing commercial participation, and alignment with national AI roadmaps. Countries across the region are actively deploying AI accelerator chips to enhance inference efficiency and strengthen digital infrastructure. Growing emphasis on AI R&D and structured chip development further accelerates market expansion in the region. Moreover, increasing government support and commercial AI commitments are expected to sustain high growth momentum.

By Region

North America

- The U.S.

- Canada

Europe

- Germany

- The U.K.

- France

- Italy

- Russia

- Spain

- Benelux

- Nordic

- Rest of Europe

Asia-Pacific

- China

- Japan

- South Korea

- India

- ANZ

- ASEAN

- Rest of Asia-Pacific

Latin America

- Brazil

- Mexico

- Argentina

- Colombia

- Rest of Latin America

Middle East & Africa

- Saudi Arabia

- UAE

- South Africa

- Israel

- Egypt

- Rest of MEA

Competitive Landscape

The AI accelerator chip market is highly competitive, with innovation and strategic alliances shaping how vendors compete. To gain an advantage, companies and research labs are focusing on new chip architectures (e.g., neuromorphic, analog compute, in-memory processing), AI-powered chip telemetry, and digital twin-enabled health monitoring platforms. Barriers to entry are high because of capital-intensive fabrication infrastructure, specialized chip design know-how, and the need for a mature software ecosystem and supply chain certifications.

Common strategies for expanding market presence include partnerships with cloud providers, mergers between chip designers and system integrators, and long-term accelerator supply contracts with data center operators. Moreover, research and development in advanced packaging and high-bandwidth memory is important for staying competitive and meeting the changing needs of the AI industry.

Some of the prominent players in the Global AI Accelerator Chip Market are:

- NVIDIA Corporation

- Advanced Micro Devices, Inc.

- Intel Corporation

- Alphabet Inc. (Google)

- Amazon.com, Inc. (Amazon Web Services)

- Microsoft Corporation

- Meta Platforms, Inc.

- Apple Inc.

- Qualcomm Incorporated

- Huawei Technologies Co., Ltd.

- Alibaba Group Holding Limited

- Broadcom Inc.

- Marvell Technology, Inc.

- Cerebras Systems, Inc.

- Groq, Inc.

- Graphcore Limited

- SambaNova Systems, Inc.

- Tenstorrent Inc.

- Cambricon Technologies Corporation Limited

- Enflame Technology Co., Ltd.

- Mythic Inc.

- Untether AI Corporation

- Other Key Players

Recent Developments

- April 2026: Meta Platforms, Inc. expanded its long-term partnership with Broadcom Inc. to develop multiple generations of custom AI accelerator chips (MTIA platform), targeting multi-gigawatt AI compute deployment across its data centers, strengthening its in-house AI silicon roadmap through 2029.

- April 2026: Broadcom Inc. announced an extended AI silicon supply and co-development agreement with Meta Platforms, Inc., including next-generation MTIA chips, advanced networking silicon, and large-scale AI infrastructure deployment exceeding 1 gigawatt capacity.

- April 2026: NVIDIA Corporation reportedly entered a strategic technology licensing agreement with Groq, Inc., aimed at strengthening NVIDIA's AI inference capabilities while expanding access to Groq's ultra-low latency Language Processing Unit (LPU) architecture.

- October 2025: Qualcomm Incorporated announced its entry into the data center AI accelerator market with the AI200 and AI250 chips, designed for high-efficiency inference workloads, marking a major shift from its traditional mobile-focused semiconductor business.

Report Details

| Report Characteristics | Details |
| --- | --- |
| Market Size (2026) | USD 51.3 Bn |
| Forecast Value (2035) | USD 606.8 Bn |
| CAGR (2026–2035) | 31.6% |
| The US Market Size (2026) | USD 18.5 Bn |
| Historical Period | 2021 – 2025 |
| Forecast Period | 2027 – 2035 |
| Base Year | 2025 |
| Estimated Year | 2026 |
| Segments Covered | By Chip Type (Graphics Processing Unit (GPU), Application-Specific Integrated Circuit (ASIC), Field Programmable Gate Array (FPGA), Central Processing Unit (CPU), Others), By Processing Type (Cloud Computing, Edge Computing), By AI Function (Inference, Training, Others), By Application (Natural Language Processing (NLP), Computer Vision, Robotics, Network Security), By Industry Vertical (Automotive, Consumer Electronics, Healthcare, Manufacturing, Financial Services, Telecom, Others) |
| Regional Coverage | North America – The US and Canada; Europe – Germany, The UK, France, Russia, Spain, Italy, Benelux, Nordic, & Rest of Europe; Asia-Pacific – China, Japan, South Korea, India, ANZ, ASEAN, Rest of APAC; Latin America – Brazil, Mexico, Argentina, Colombia, Rest of Latin America; Middle East & Africa – Saudi Arabia, UAE, South Africa, Turkey, Egypt, Israel, & Rest of MEA |

Frequently Asked Questions

How big is the Global AI Accelerator Chip Market?

The Global AI Accelerator Chip Market is estimated at USD 51.3 billion in 2026 and is expected to reach USD 606.8 billion by the end of 2035.

What is the CAGR of the Global AI Accelerator Chip Market from 2026 to 2035?

The market is expected to grow at a CAGR of 31.6% over the forecast period.

What factors are driving the growth of the Global AI Accelerator Chip Market?

Technological advancements in generative AI and edge inference, regulatory mandates for AI energy efficiency, and government funding for national AI compute infrastructure are driving the growth of the AI accelerator chip market globally.

What are the major trends in the Global AI Accelerator Chip Market?

The adoption of AI-driven workload scheduling and real-time thermal monitoring, and a shift toward cloud-based accelerator telemetry and fleet management platforms, are the major trends in the market.

Which region is expected to hold the largest share of the Global AI Accelerator Chip Market in 2026?

North America is expected to account for the largest market share in 2026, with a share of about 42.8%.

Which region is expected to grow the fastest in the Global AI Accelerator Chip Market?

Asia-Pacific is expected to be the fastest-growing region in the market during the forecast period.

Who are the key players in the Global AI Accelerator Chip Market?

Some of the key players in the Global AI Accelerator Chip Market are NVIDIA Corporation, Advanced Micro Devices, Inc., Intel Corporation, Amazon.com, Inc. (Amazon Web Services), Microsoft Corporation, and Meta Platforms, Inc., among many others.

How is the Global AI Accelerator Chip Market segmented?

The market is segmented by chip type, processing type, AI function, application, and industry vertical.