What is the Europe DeepFake AI Market Size?

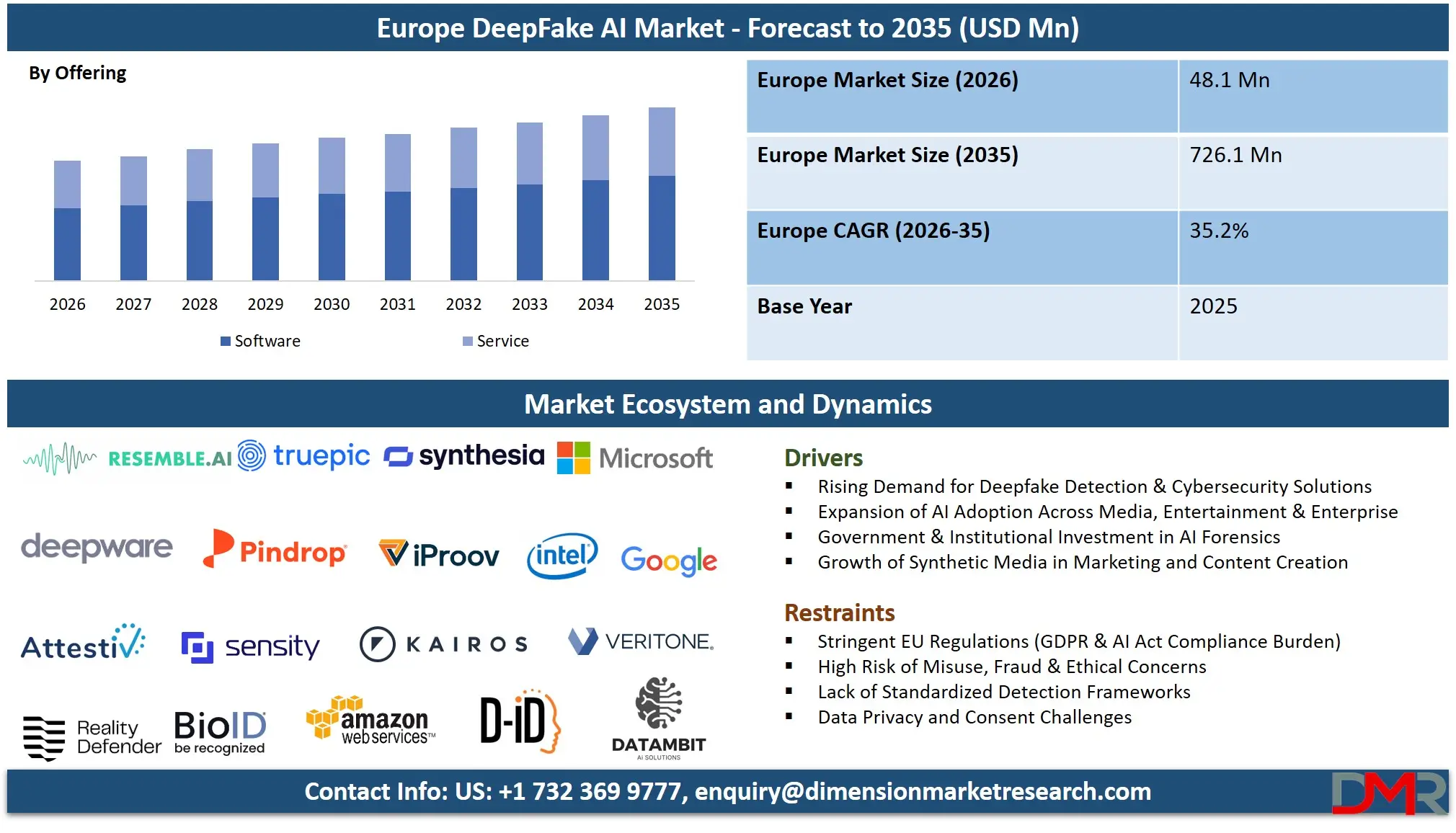

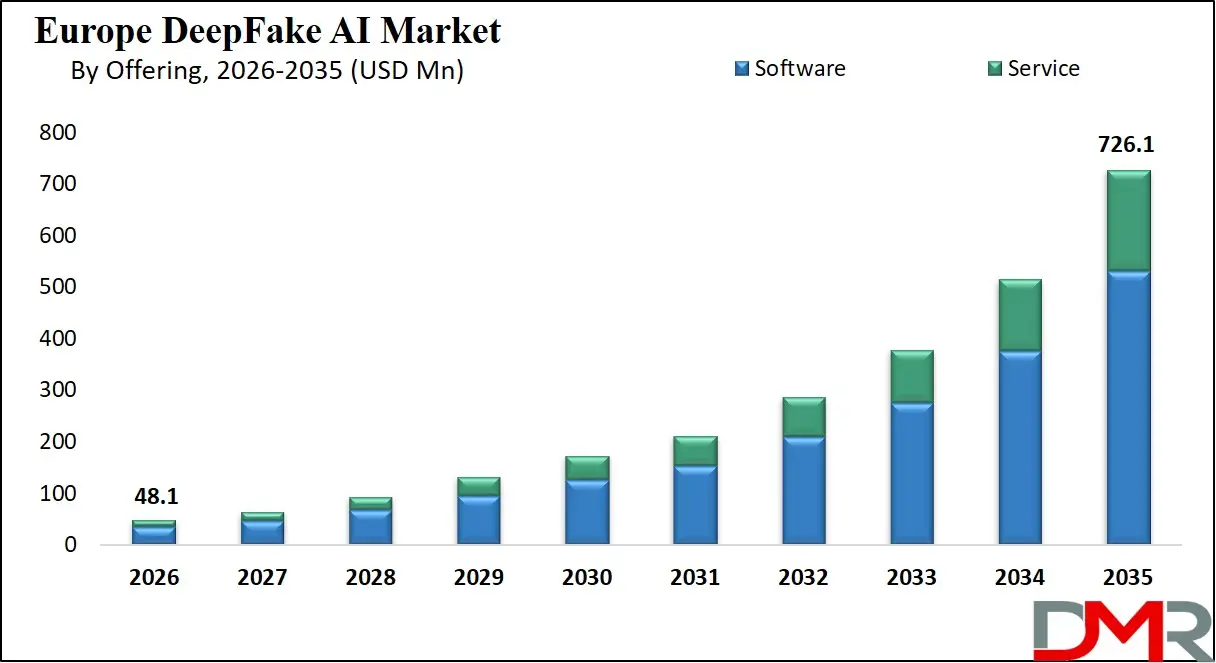

The Europe DeepFake AI market is expected to reach a value of USD 48.1 million in 2026, and it is further anticipated to reach USD 726.1 million by 2035, growing at a CAGR of 35.2% during the forecast period.
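The quoted growth rate can be sanity-checked against the two endpoint values. The sketch below is only an illustration: it assumes nine annual compounding periods (2026 to 2035) and the standard compound annual growth rate formula, and the function name `cagr` is hypothetical rather than anything taken from the report.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate as a fraction (0.352 means 35.2%)."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1 / periods) - 1


# Figures from the report: USD 48.1 million (2026) and USD 726.1 million (2035).
# Assumption: nine annual compounding periods between 2026 and 2035.
implied_rate = cagr(48.1, 726.1, 2035 - 2026)
print(f"Implied CAGR: {implied_rate:.1%}")

# Forward check: compound the 2026 value at the quoted 35.2% for nine years.
projected_2035 = 48.1 * (1 + 0.352) ** 9
print(f"Projected 2035 value: USD {projected_2035:.1f} million")
```

Under that nine-period assumption, the implied rate rounds to the quoted 35.2%, and compounding forward lands within rounding distance of USD 726.1 million.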

The DeepFake AI market covers the development, deployment, and use of artificial intelligence (AI) techniques, chiefly Generative Adversarial Networks (GANs) and autoencoders, to generate or detect hyper-realistic synthetic media. The market is growing quickly because generative AI keeps improving, the risk of misinformation and digital fraud is rising, and European businesses and government agencies consequently need robust detection, media authentication, and forensic analysis tools.

Technology hubs in Western Europe, including London, Berlin, Paris, and the Nordic tech corridors, are emerging as sources of both synthetic media tools and sophisticated cybersecurity countermeasures. This ecosystem combines AI research laboratories, software vendors specializing in detection algorithms, professional service firms offering ethical advice and digital forensics, and managed security service providers (MSSPs) that include deepfake defense as part of a broader cyber-resilience model. European organizations increasingly prioritize these solutions not only to protect brand reputation and counter financial fraud, but also to comply with evolving EU rules on AI transparency, data protection (GDPR), and the upcoming AI Act requirements for labeling synthetic content.

Key Takeaways

- Market Size & Forecast: The Europe DeepFake AI market is projected to reach USD 48.1 million by 2026 and expand to USD 726.1 million by 2035, fueled by the dual drivers of generative AI proliferation and stringent European digital identity verification requirements.

- Growth Rate & Outlook: The market is expected to grow at a CAGR of 35.2% due to increasing risks of deepfake-based fraud in the BFSI industry, state-sponsored disinformation attacks on European elections, and mass adoption of biometric verification systems by enterprises.

- Primary Growth Drivers: Growth is driven by the rising volume of AI-generated disinformation on social media platforms, mounting regulatory pressure for synthetic media disclosure under the EU AI Act, and the growing need for digital evidence authentication in European legal and law enforcement systems.

- Key Market Trends: Notable trends include the integration of real-time deepfake detection into video conferencing and call center security, blockchain-based tracking of immutable media provenance, the development of specialized forensic software whose findings are intended to be legally admissible, and a shift toward managed detection and response services for deepfake threats.

- By Offering Type Analysis: Software solutions are projected to dominate the offering segment with the highest market share. This dominance is driven by the need for automated, scalable systems able to process massive volumes of video and audio material across European news agencies, social media moderation teams, and KYC (Know Your Customer) procedures in financial institutions.

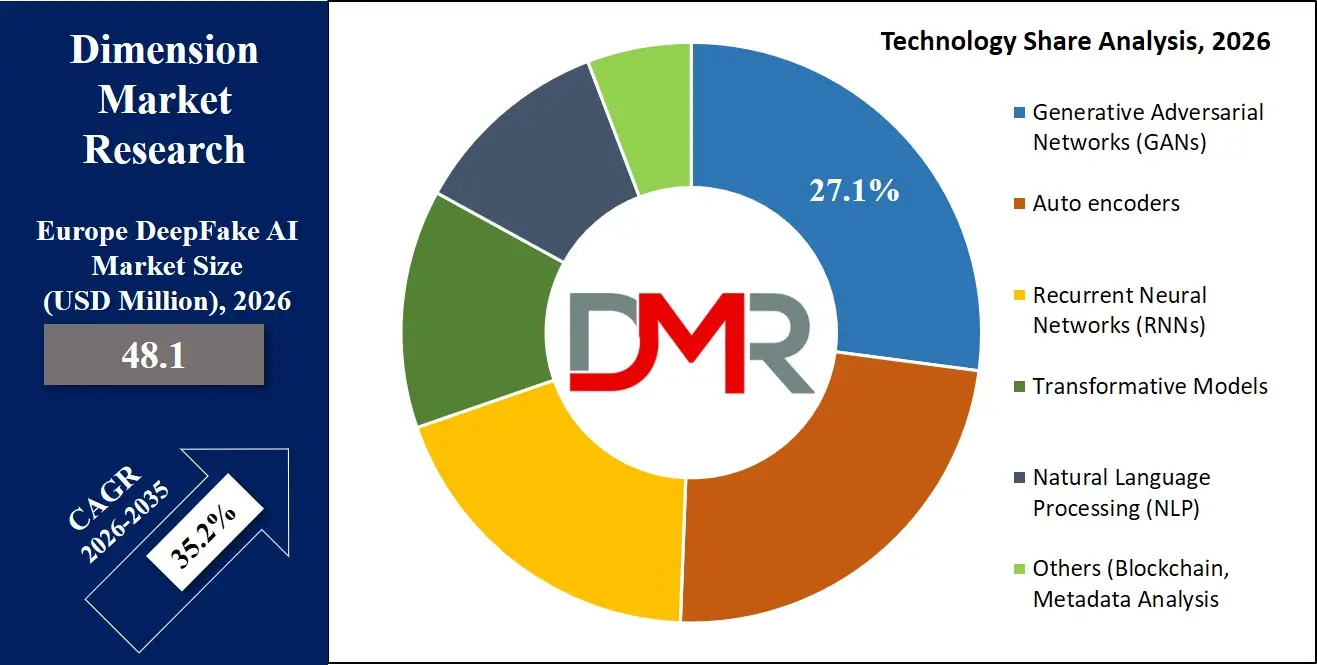

- By Technology Analysis: Generative Adversarial Networks (GANs) are poised to dominate this segment because they underpin both the generation and detection sides of the market. Techniques for detecting GAN-generated content are developing rapidly to keep pace with its realism, while NLP and forensic analysis are becoming fundamental to detecting synthetic audio and manipulated text.

- By End User Analysis: The BFSI sector is projected to be the largest adopter in this market, because BFSI institutions are investing heavily in fraud detection to stop deepfake-based identity theft and social engineering attacks.

What is DeepFake AI?

DeepFake AI is the use of advanced artificial intelligence and deep learning methods, especially Generative Adversarial Networks (GANs) and autoencoders, to generate or modify video, audio, and image content in a highly realistic manner. In Europe, the term covers both the generative side (creation of synthetic media for entertainment, marketing, and training) and the defensive side (detection algorithms, forensics, and media provenance applications developed to recognize and curb manipulated content).

Unlike more general applications of generative AI, the DeepFake AI market in Europe is shaped by the tension between innovation in content generation and the need for trust and transparency. It is a two-sided technology landscape in which the same neural network architecture that can de-age an actor in a French movie can also be used to fake video calls to German bank clients. As a result, the market is characterized by a technological arms race between increasingly advanced generative models and the forensic tools used to uncover their digital artifacts.

Use Cases

- Fraud Detection & Biometric Verification: European banks and fintech organizations use deepfake detection models to safeguard remote identity verification (KYC) procedures against presentation attacks, in which synthetic video or audio is used to impersonate a legitimate customer during onboarding or high-value transactions.

- Government & National Security: European electoral commissions and government agencies use forensic analysis software and media authentication tools to detect and flag AI-generated disinformation campaigns that aim to sway public opinion or disrupt democratic processes during election campaigns.

- Content Creation & Verification: European broadcasters and film studios use GANs and transformative models for legitimate creative work, including dubbing localization, de-aging actors, and creating CGI characters. At the same time, news agencies use detection software to ensure that the user-generated material they show is authentic.

- Digital Evidence Authentication: European law firms and forensic investigators are adopting deepfake detection technology to assess the authenticity of digital evidence presented in court. This involves examining audio recordings, surveillance video, and digital images to confirm that they have not been tampered with and are legally admissible.

- Call Center Security: Telecommunication operators and corporate contact centers combine real-time NLP and audio deepfake detection to counter vishing (voice phishing) attacks and AI-generated voice fraud, so that customers do not fall victim to social engineering attacks that bypass conventional security protocols.

Market Dynamics

Key Drivers of the Europe DeepFake AI Market

Regulatory Compliance Mandates

The adoption of the EU AI Act and the Digital Services Act requires European businesses to label and identify synthetic media. Violations can lead to heavy fines, which is accelerating the adoption of detection programs and media validation tools. Financial institutions, media companies, and social networks are now all expected to show how they curb deepfake-enabled fraud and disinformation. This regulatory pressure turns deepfake defense from a voluntary cybersecurity policy into a mandatory operational requirement for remaining in the market and retaining consumer trust in the European digital single market.

Sophistication of Financial Fraud

Deepfake-enabled identity theft against European financial institutions is surging, targeting KYC processes and high-value transactions. Criminals use generative AI to circumvent biometric checks by producing synthetic video and voice clones. This growing threat vector undermines existing fraud detection models and forces banks and financial technology providers based in London, Frankfurt, and Paris to invest heavily in sophisticated liveness detection and forensic analysis software to safeguard customer funds and protect their institutional reputation.

Market Challenges / Restraints in the Europe DeepFake AI Market

GDPR Compliance Tension

Deepfake detection frequently requires examining biometric indicators and facial structure, which puts it in direct tension with GDPR's stringent restrictions on the processing of sensitive personal data. European vendors have to design mechanisms that identify manipulation without retaining or using identifiable biometric signatures. Resolving this legal uncertainty requires costlier privacy-preserving AI methods, including on-device processing and federated learning, which complicate deployment and may lower detection accuracy relative to jurisdictions not subject to European law.

Adversarial AI Evolution

Detection technology is inherently reactive, constantly chasing the growing sophistication of generative models. As European research labs advance transformer architectures and GANs, the digital artifacts on which detection software relies become fainter. This technological arms race imposes unsustainable research and development costs on software providers and leaves a window of vulnerability in which emerging deepfake methods outpace current forensic tools, casting doubt on the reliability of digital evidence in Europe's legal system and media.

Growth Opportunities in Europe DeepFake AI Market

Sovereign Media Provenance Infrastructure

European public broadcasters and government agencies prioritize trusted cloud and sovereign technology stacks. This presents a profitable market opportunity for European-based suppliers of blockchain-based media authentication and cryptographic provenance tracking. Solutions that establish a tamper-evident chain of custody over digital content align closely with EU values of transparency and data integrity, and position specialized European startups to win major public-sector and state-media procurement contracts over non-European hyperscalers.

Managed Deepfake Detection Services

European mid-market companies lack the in-house expertise to address the changing deepfake threat environment. This creates a significant growth opportunity for Managed Security Service Providers (MSSPs) to offer continuous monitoring, real-time alerting, and incident response for synthetic media attacks. By integrating deepfake protection into more comprehensive cyber-resilience offerings, providers can reach niche customer segments such as law firms, regional banks, and corporate communications departments that need end-to-end protection against reputational and financial losses.

Trends in the Europe DeepFake AI Market

Audio-First Security Emphasis

Although visual deepfakes are more likely to make the news, audio detection using NLP technologies is taking precedence in the European financial and telecommunications industries. The rise of vishing and AI-cloned executive voices in social engineering fraud has focused investment on call center security and voice fraud prevention. This trend reflects the particular exposure of European banking and customer service systems that rely on phone channels, which drives demand for real-time voice biometric analysis that identifies synthetic speech patterns in live conversations.

Pre-Capture Content Credentials Adoption

The European market is shifting toward preventative provenance rather than post-hoc detection. Leading camera manufacturers and news agencies are embracing C2PA standards and cryptographic watermarking at the time of media creation. This "verify, don't detect" model, which is strongly promoted by EU policy frameworks, embeds tamper-evident metadata directly into files, allowing downstream platforms and newsrooms to confirm authenticity immediately without relying solely on fallible algorithmic detection of manipulated content.

Research Scope and Analysis

By Offering

Software solutions are projected to dominate the offering segment in the Europe DeepFake AI market. This encompasses DeepFake Detection Algorithms deployed by social media platforms for automated content moderation, Media Authentication Tools utilized by European news agencies and broadcasters for source verification, and Forensic Analysis Software employed by law enforcement and legal professionals examining digital evidence integrity. The dominance of software stems from the imperative for scalable, high-volume automated analysis that far exceeds human moderation capabilities alone. Concurrently, Professional and Managed Services are anticipated to experience accelerated growth as European organizations increasingly seek specialized external expertise for incident response readiness, navigating complex EU AI Act compliance obligations, and establishing continuous monitoring frameworks against evolving deepfake threats.

By Technology

Generative Adversarial Networks and Transformative Models constitute the foundational technologies underpinning the market, yet their application remains distinctly bifurcated between creation and defense. On the defensive front, the market relies critically upon the detection of GAN-specific visual artifacts and inconsistencies imperceptible to human observers. Furthermore, Natural Language Processing has emerged as an indispensable capability for analyzing synthetic audio manipulation and AI-generated text-based disinformation campaigns targeting European audiences.

A rapidly expanding niche involves Blockchain and Metadata Analysis methodologies, which offer a "verify, not detect" paradigm by establishing cryptographically secure, tamper-proof records of a media file's provenance and complete edit history. This approach resonates strongly with European legal institutions and journalism sectors prioritizing evidentiary integrity and source transparency.
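The core idea behind a tamper-evident edit history can be sketched as a hash-linked log, in which each record commits to the media bytes and to the record before it. This is a simplified illustration only, not the C2PA manifest format or any vendor's implementation; the function names (`record_hash`, `append_edit`, `verify_chain`) and record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of a provenance record."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_edit(chain: list, action: str, media_bytes: bytes) -> list:
    """Return a new chain with one more record linked to the previous one."""
    body = {
        "action": action,
        "media_sha256": hashlib.sha256(media_bytes).hexdigest(),
        "prev_hash": chain[-1]["record_hash"] if chain else GENESIS,
    }
    return chain + [{**body, "record_hash": record_hash(body)}]


def verify_chain(chain: list, current_media: bytes) -> bool:
    """Check every link, then check that the last record matches the file."""
    prev = GENESIS
    for entry in chain:
        body = {k: entry[k] for k in ("action", "media_sha256", "prev_hash")}
        if entry["prev_hash"] != prev or entry["record_hash"] != record_hash(body):
            return False
        prev = entry["record_hash"]
    latest = hashlib.sha256(current_media).hexdigest()
    return bool(chain) and chain[-1]["media_sha256"] == latest


# Illustrative byte strings standing in for real media files.
original = b"raw camera frame bytes"
cropped = b"cropped frame bytes"

chain = append_edit([], "capture", original)
chain = append_edit(chain, "crop", cropped)

print(verify_chain(chain, cropped))              # file matches its history
print(verify_chain(chain, b"manipulated bytes"))  # file was altered afterwards
```

A hash chain on its own only makes tampering detectable by whoever holds a trusted copy of the latest hash. A production system would additionally sign the records or anchor them in an external ledger, which is the role that signed manifests or a blockchain would play.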

By End User

The BFSI sector represents the largest end-user segment for defensive deepfake AI applications within the European market, driven primarily by intensifying requirements for robust Customer Verification and Authentication protocols during remote digital onboarding. Financial institutions face mounting pressure to fortify Anti-Money Laundering controls and Fraud Detection mechanisms against sophisticated synthetic identity attacks leveraging AI-generated video and voice cloning. European banks and fintech companies are investing substantially in liveness detection and biometric anti-spoofing technologies to safeguard high-value transactions and maintain regulatory compliance. Beyond BFSI, significant demand emanates from Media and Entertainment organizations requiring content provenance tools, Government agencies countering election disinformation, and Legal professionals seeking reliable Digital Evidence Authentication solutions admissible in European court proceedings.

Europe DeepFake AI Market Report is segmented based on the following:

By Offering

- Software
  - DeepFake Detection Algorithms
  - Media Authentication Tools
  - Forensic Analysis Software
- Service
  - Professional Services
  - Managed Services

By Technology

- Generative Adversarial Networks (GANs)
- Autoencoders
- Recurrent Neural Networks (RNNs)
- Transformative Models
- Natural Language Processing (NLP)
- Others (Blockchain, Metadata Analysis)

By End User

- BFSI
  - Customer Verification & Authentication
  - Anti-Money Laundering & Fraud Detection
- Telecom
  - Call Center Security
  - Fraud Detection
- Government
  - Election Campaigns
  - National Security
  - Government Communications
  - Content Verification & Moderation
  - Ethical Hacking & Digital Security
  - Enforcement Agencies
- Healthcare
  - Medical Training & Simulation
  - Patient Case Simulations
  - Telemedicine & Virtual Healthcare
- Legal
  - Digital Evidence Authentication
  - Intellectual Property Protection
  - Legal & Ethical Consultation
- Media & Entertainment
  - CGI Character Creation
  - De-aging Actors
  - Special Effects & Visual Enhancements
  - Digital Content Creation
  - Celebrity & Influencer Marketing
  - News Agencies
  - Social Media
- Retail & E-commerce
  - Customer Service & Personalization
  - Visual Merchandising
  - Security & Fraud Prevention
- Others

Competitive Landscape

The competitive environment of the Europe DeepFake AI market is split between global technology leaders and European cybersecurity and AI ethics specialists. Large cloud providers and security software makers are adding deepfake detection features to their existing enterprise security suites, aiming to leverage long-standing customer relationships and extensive distribution channels. Nevertheless, the European market is distinctly fertile ground for specialized vendors that offer sovereign solutions.

These niche vendors differentiate themselves through GDPR-compliant forensic practices, open detection algorithms, and blockchain-based media provenance systems tailored to EU regulatory standards. European government agencies and broadcasters show a strong preference for trusted local providers when procuring sensitive authentication infrastructure. This regulation-driven fragmentation creates lasting opportunities for regional innovators as well as established international players.

Some of the prominent players in the DeepFake AI Market are:

- Microsoft

- Amazon Web Services

- Google

- Intel Corporation

- Veritone

- Synthesia

- D-ID

- Datambit

- Kairos AR

- Pindrop

- Truepic

- iProov

- BioID

- Sensity AI

- Reality Defender

- Attestiv

- Deepware

- Resemble AI

- Reface AI

- Paravision

- Other Key Players

Recent Developments

- March 2026: Multiverse Computing received the TechTour Growth Europe Award as one of the most successful AI innovators in Europe for developing AI-based solutions, including cybersecurity and synthetic media applications, solidifying its role in the deepfake detection and responsible AI innovation ecosystem.

- February 2026: Microsoft collaborated with the UK government and research organisations to develop improved deepfake detection algorithms, intended to assist law enforcement agencies and improve public safety by enhancing AI forensics, detection rates, and consistent assessment frameworks across the European regulatory landscape.

- January 2026: X (formerly Twitter) and Grok, the AI system created by xAI, came under investigation by European regulators over their deepfake content generation practices and Digital Services Act compliance, underscoring growing concern over AI-based platforms operating in Europe.

Report Details

| Report Characteristics | Details |
| --- | --- |
| Market Size (2026) | USD 48.1 Mn |
| Forecast Value (2035) | USD 726.1 Mn |
| CAGR (2026–2035) | 35.2% |
| Historical Period | 2021 – 2025 |
| Forecast Period | 2027 – 2035 |
| Base Year | 2025 |
| Estimate Year | 2026 |
| Segments Covered | By Offering (Software and Service), By Technology (Generative Adversarial Networks (GANs), Autoencoders, Recurrent Neural Networks (RNNs), Transformative Models, Natural Language Processing (NLP), and Others (Blockchain, Metadata Analysis)), By End User (BFSI, Telecom, Government, Healthcare, Legal, Media & Entertainment, Retail & E-commerce, and Others) |
| Regional Coverage | Europe – Germany, UK, France, Russia, Spain, Italy, Benelux, Nordic, & Rest of Europe |

Frequently Asked Questions

How big is the Europe DeepFake AI Market?

The Europe DeepFake AI market is valued at USD 48.1 million in 2026 and is projected to reach USD 726.1 million by 2035, reflecting sustained and exponential expansion driven by escalating synthetic media threats and stringent regulatory compliance mandates.

What is the CAGR of the Europe DeepFake AI Market from 2026 to 2035?

The Europe DeepFake AI market is expected to grow at a CAGR of 35.2 percent from 2026 to 2035, indicating robust long-term growth fueled by accelerating adoption of detection software, media authentication tools, and forensic analysis services across European enterprises and government agencies.

What factors are driving the growth of the Europe DeepFake AI Market?

The primary factors driving market growth are escalating deepfake-enabled financial fraud targeting European banks, mandatory synthetic media transparency requirements under the EU AI Act, the proliferation of AI-generated election disinformation, and rising demand for digital evidence authentication in legal proceedings.

What are the major trends in the Europe DeepFake AI Market?

Major trends include the adoption of pre-capture content provenance standards such as C2PA, the emergence of specialized managed detection services, and an increasing emphasis on privacy-preserving forensic methodologies compliant with GDPR.

Who are the key players in the Europe DeepFake AI Market?

Microsoft, Intel, Sensity AI, Reality Defender, Truepic, Deepware, BioID, ID R&D, Hive Moderation, Blackbird.AI, and specialized European forensic software vendors and managed security service providers are prominent Europe DeepFake AI market participants.

How is the Europe DeepFake AI Market segmented?

This market is segmented by offering (software and services), technology (GANs, autoencoders, RNNs, transformative models, NLP, and others such as blockchain and metadata analysis), and end user (BFSI, telecom, government, healthcare, legal, media and entertainment, retail and e-commerce, and others).