What is the DeepFake AI Market Size?

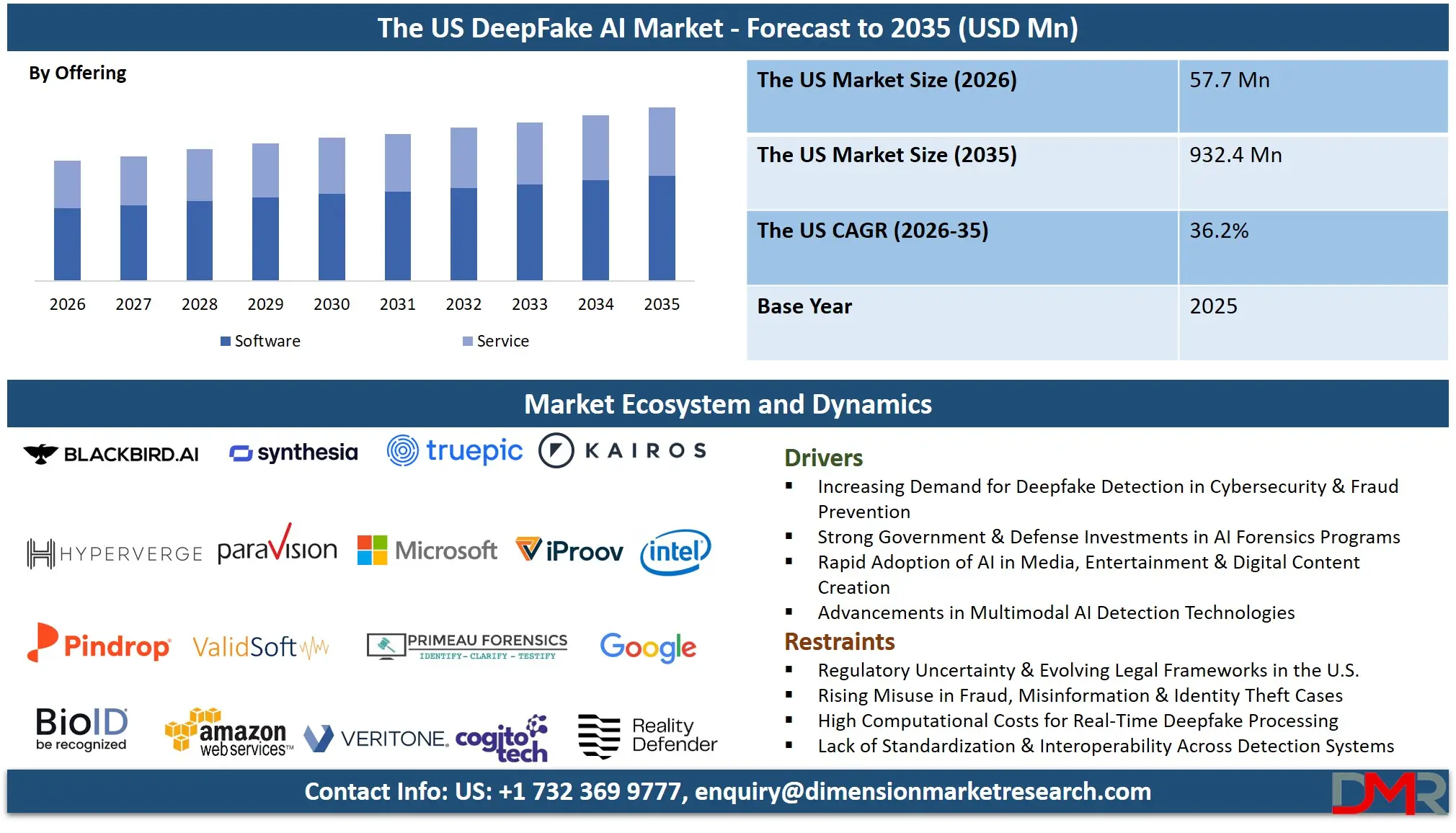

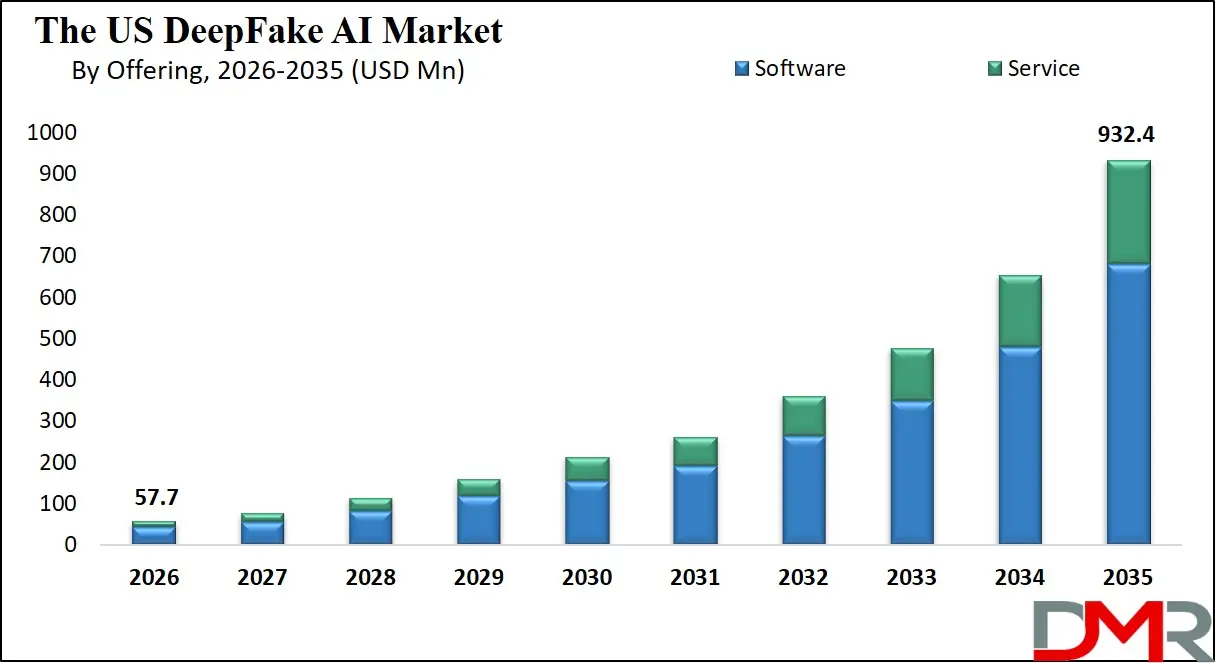

The US DeepFake AI market is expected to reach a value of USD 57.7 million in 2026, and it is further anticipated to reach USD 932.4 million by 2035, growing at a CAGR of 36.2% during the forecast period.
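As a quick sanity check on the figures above, the implied compound annual growth rate can be recomputed from the two endpoint values. The sketch below assumes nine compounding years (2026 to 2035); the function name `cagr` is illustrative and does not come from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoint values from the report (USD million); the 9-year span is an assumption.
implied = cagr(57.7, 932.4, 2035 - 2026)
print(f"Implied CAGR: {implied:.1%}")  # Implied CAGR: 36.2%
```

Under that assumption the endpoints reproduce the stated 36.2% rate.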

Deepfake AI technologies involve creating synthetic media using generative adversarial networks (GANs) and autoencoders, or developing tools for detecting hyper-realistic fake content. The rapid advance of generative AI and the rising risk of digital fraud have made synthetic media creation and manipulation a growing problem for businesses and government institutions, driving demand for reliable solutions that detect and combat misinformation and synthetic media.

Silicon Valley, New York City, Austin, Seattle, and other hubs serve as international centers for developing new technologies for generating and identifying fake media content. The market involves AI and machine learning researchers at top American universities, detection software vendors, digital forensics services, and managed security service providers that develop and integrate solutions for defending against deepfakes. In addition, the rise of state-level initiatives to regulate AI technologies prompts organizations to seek solutions that limit their liability.

Key Takeaways

- Market Size & Forecast: The US DeepFake AI market is projected to reach USD 57.7 million by 2026 and expand dramatically to USD 932.4 million by 2035, fueled by the dual drivers of generative AI commercialization and heightened national security concerns regarding synthetic media.

- Growth Rate & Outlook: The market is expected to grow at a CAGR of 36.2%, driven by growing risks from deepfake-driven cyber fraud in the BFSI industry, election integrity issues highlighted by CISA, and the proliferation of biometrics-based corporate identity verification systems.

- Primary Growth Drivers: Growth drivers include the spread of AI-generated disinformation on social media networks, increased pressure from regulatory agencies such as the FTC and SEC, and the need for digital authentication of evidence in US court cases.

- Key Market Trends: The main market trends include real-time detection built into video conferencing applications, blockchain technology for media provenance, forensic software designed specifically for courtroom use, and managed deepfake detection and response services.

- By Offering Type Analysis: The Software category is expected to dominate the offering segment. Adoption will be driven by the need for automated solutions capable of handling large volumes of video and audio content from news organizations, Hollywood film studios, social media monitoring platforms, and the KYC procedures of financial institutions.

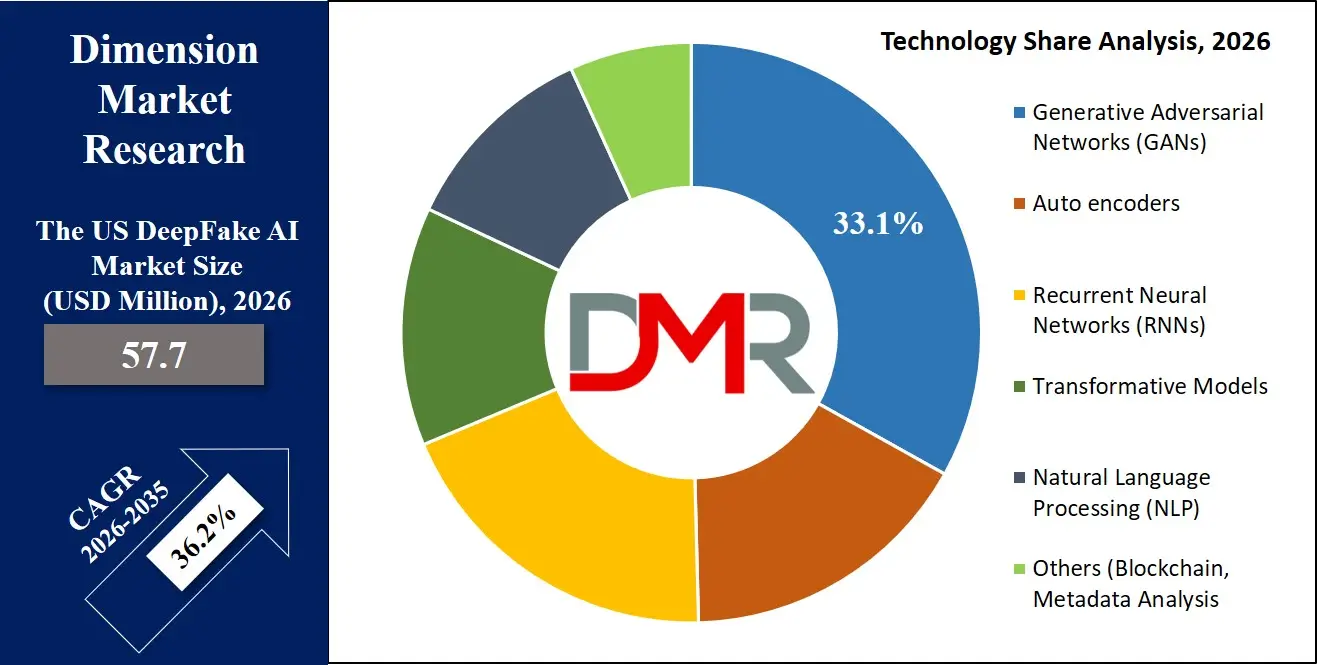

- By Technology Analysis: Generative Adversarial Networks are poised to dominate this segment because they are used both to create and to detect deepfakes. Detection technology is evolving fast to keep up with fake content created using GANs. Moreover, NLP and forensic technologies are increasingly used to detect deepfake videos and images, including those with synthetic audio used in fraudulent activities.

- By End User Analysis: The BFSI end-user segment is expected to hold a dominant share of the deepfake AI market. BFSI institutions, from Wall Street firms to regional banks, require authentication software and detection tools that can prevent deepfake fraud.

What is DeepFake AI?

The term DeepFake AI describes the practice of using state-of-the-art artificial intelligence and deep learning technologies, such as GANs and autoencoders, to create or manipulate videos, audio, and images in a highly realistic fashion. In the United States, DeepFake AI cuts both ways: on the one hand, it denotes the use of AI-driven generative technology to create Hollywood movies, advertising materials, or satirical videos; on the other hand, it covers deepfake detection software, forensic investigation, and media provenance methods used to protect national and corporate security.

What sets DeepFake AI apart from other domains of generative AI is the influence of the First Amendment on how this market has developed in the United States, and the resulting tension between creative freedom and national security. The same AI-powered technology that can digitally resurrect a deceased actor on screen, for instance, can be repurposed to create fake video calls aimed at deceiving executives of major corporations or to generate content that destabilizes political systems ahead of elections.

Use Cases

- Financial Services Identity Protection: US financial institutions adopt deepfake detection technology to protect their remote KYC protocols from synthetic video and audio identity theft during the account opening process and wire transfer transactions.

- Federal Election Integrity Defense: The DHS and state election commissions apply deepfake detection technology to combat AI-based misinformation campaigns by foreign governments that aim to influence US voters' decisions at the polls.

- Hollywood Creation and News Verification: US film studios leverage deep learning techniques for de-aging and special effects, while news organizations such as CNN use advanced deepfake detection tools to verify user-uploaded footage before it airs.

- Courtroom Digital Evidence Admissibility: US lawyers and forensic experts analyze digital audio and video evidence to prove that such evidence complies with the Daubert standard, making it suitable for presentation in courtrooms.

- Call Center Voice Fraud Prevention: US telecom companies employ deepfake detection technology in real-time speech analytics solutions to stop social engineering fraudsters from conducting vishing scams.

Market Dynamics

Key Drivers of the US DeepFake AI Market

Escalating Financial and Corporate Fraud

Financial organizations in the United States are experiencing a historic wave of identity theft and social engineering using deepfakes. Fraudsters exploit AI-based image generation techniques to evade biometric identification systems and to impersonate executives in attempts to wire money fraudulently. These emerging threats have made previous methods of fraud detection inadequate, prompting financial organizations on Wall Street and in Silicon Valley to spend millions on liveness detection software.

National Security and Election Integrity

AI-generated disinformation has been recognized as a major threat by federal agencies such as the FBI, CISA, and DHS. Highly advanced deepfake videos that target US elections create an urgent need for methods that can detect such attacks on American democratic processes. This security requirement is expected to create new markets, with increased federal procurement of forensic software for detecting deepfakes produced by foreign nation-states.

Market Challenges / Restraints in the US DeepFake AI Market

First Amendment Legal Complexity

Unlike the EU's regulatory regime, which faces no comparable legal barrier, US deepfake regulation operates under the extensive protections of the First Amendment, especially for political satire and artistic works. Consequently, mandatory labeling schemes or prohibitions on the use of deepfakes would be constitutionally constrained, giving rise to a state-by-state patchwork approach to regulation. To avoid First Amendment violations, detection vendors must build complex detection mechanisms that respect individuals' constitutional rights while still ensuring security.

Adversarial AI Evolution and False Positives

Detection solutions will continue to operate in an adversarial relationship with deepfakes as ever more advanced generative AI models come out of US labs. As these models evolve, the digital artifacts that detection software relies on become fainter, resulting in high rates of false positives. This technological race imposes heavy research and development costs on detection vendors and raises questions about legal liability when real content is mislabeled as fake.

Growth Opportunities in the US DeepFake AI Market

Federal and Defense Sector Procurement

There is currently a large untapped opportunity for deepfake detection and media forensics companies in the US government market. Agencies such as the DoD and the Intelligence Community are focusing heavily on building their capabilities for countering disinformation and conducting information warfare. This creates an attractive opportunity for American vendors of forensic and attribution tools to sell products that comply with federal security guidelines and support national security and defense goals against adversarial malign activity.

Integration with Enterprise Identity Platforms

American enterprises are rapidly adopting zero-trust security architectures, which creates opportunities for deepfake detection firms to embed their technology in the biometric authentication flows of IAM tools such as Okta, Microsoft Entra, and Ping Identity. Vendors can thereby serve enterprise security teams that need deepfake detection built into their identity and access verification procedures.

Trends in the US DeepFake AI Market

State-Level Legislative Patchwork

In the absence of a national framework, states have begun enacting their own deepfake laws against non-consensual intimate material and election misinformation at a rapid pace. California, Texas, and New York are spearheading this new wave of regulation. These disparate state mandates are compelling companies to deploy detection technologies and content verification capabilities that can adapt to the differing regulatory landscapes of multiple jurisdictions, forming a distinct compliance market for authentication technology designed around state-level laws.

Platform-Driven Content Provenance Initiatives

Several of the largest US technology companies, such as Meta, Google, and Adobe, are leading the Content Authenticity Initiative (CAI) and Coalition for Content Provenance and Authenticity (C2PA) standards. An important trend emerging from these projects is the shift in market focus from reactive detection to proactive authentication at the point of capture. By embedding tamper-evident metadata when media is created, for example on iPhones and other cameras, these approaches allow platform services to verify the origin of media downstream.
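The idea of tamper-evident metadata attached at creation time can be illustrated with a minimal sketch. This is not the C2PA manifest format, which relies on certificate-based digital signatures; the example below substitutes a shared-key HMAC for simplicity, and the names `DEVICE_KEY`, `sign_at_capture`, and `verify_downstream` are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real provenance systems use certificate-based
# signatures rather than a shared secret.
DEVICE_KEY = b"demo-device-key"


def sign_at_capture(media: bytes, metadata: dict, key: bytes = DEVICE_KEY) -> dict:
    """Bind metadata to the media bytes and sign the pair at creation time."""
    claim = {"sha256": hashlib.sha256(media).hexdigest(), "metadata": metadata}
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_downstream(media: bytes, manifest: dict, key: bytes = DEVICE_KEY) -> bool:
    """Return True only if neither the media nor its metadata has changed."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the metadata or the signature was altered
    return manifest["claim"]["sha256"] == hashlib.sha256(media).hexdigest()


original = b"raw image bytes"
manifest = sign_at_capture(original, {"device": "example-camera"})
print(verify_downstream(original, manifest))            # True
print(verify_downstream(original + b"edit", manifest))  # False
```

Any change to the media bytes or to the recorded metadata invalidates the check, which is the property a downstream platform relies on when it verifies rather than detects.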

Research Scope and Analysis

By Offering

Software offerings are forecast to lead the offering segment of the US DeepFake AI market. They include DeepFake Detection Algorithms used by social media companies for automated content analysis, Media Authentication Tools used by news outlets and film production companies to authenticate sources, and Forensic Analysis Software used by federal authorities and lawyers to examine the authenticity of digital evidence. The predominance of software can be attributed to the need for automation at volumes that exceed the capacity of human moderators. In addition, Professional and Managed Services are predicted to grow rapidly as organizations seek outside specialists to help them cope with changing state-level deepfake legislation.

By Technology

Generative Adversarial Networks constitute the foundational technology on which the industry operates, but their use is decidedly two-sided, spanning both creation and defense. On the defense side, the industry needs detection capabilities that identify visual anomalies and inconsistencies in GAN-generated images that humans cannot detect.

Additionally, Natural Language Processing has become essential for detecting audio manipulation in corporate fraud cases and for identifying text-based, AI-generated disinformation campaigns aimed at Americans. Another area that continues to evolve and expand is Blockchain and Metadata Analysis, which adopts the "verify, not detect" approach by providing unforgeable records of a media file's origin. This is particularly appealing to legal and journalistic entities in the United States.

By End User

End users in the BFSI industry constitute the largest segment of the US market for deepfake AI technology, mainly because of the growing need for effective Customer Verification and Authentication in remote digital onboarding and transactions. Banks need to secure their systems against fraud and money laundering schemes that use advanced AI techniques to generate voice and video clones. US financial firms and banks have been investing substantially in liveness detection and biometric spoof detection technologies to protect their assets and comply with BSA/AML regulations. Beyond BFSI, there is considerable demand for deepfake technology from media houses seeking provenance verification, from government bodies fighting election misinformation, and from legal practitioners who need digital evidence authentication technologies for use in federal and state court cases.

The US DeepFake AI Market Report is segmented based on the following:

By Offering

- Software
  - DeepFake Detection Algorithms
  - Media Authentication Tools
  - Forensic Analysis Software

- Service
  - Professional Services
  - Managed Services

By Technology

- Generative Adversarial Networks (GANs)

- Autoencoders

- Recurrent Neural Networks (RNNs)

- Transformative Models

- Natural Language Processing (NLP)

- Others (Blockchain, Metadata Analysis)

By End User

- BFSI
  - Customer Verification & Authentication
  - Anti-Money Laundering & Fraud Detection

- Telecom
  - Call Center Security
  - Fraud Detection

- Government
  - Election Campaigns
  - National Security
  - Government Communications
  - Content Verification & Moderation
  - Ethical Hacking & Digital Security
  - Enforcement Agencies

- Healthcare
  - Medical Training & Simulation
  - Patient Case Simulations
  - Telemedicine & Virtual Healthcare

- Legal
  - Digital Evidence Authentication
  - Intellectual Property Protection
  - Legal & Ethical Consultation

- Media & Entertainment
  - CGI Character Creation
  - De-aging Actors
  - Special Effects & Visual Enhancements
  - Digital Content Creation
  - Celebrity & Influencer Marketing
  - News Agencies
  - Social Media

- Retail & E-commerce
  - Customer Service & Personalization
  - Visual Merchandising
  - Security & Fraud Prevention

- Others

Competitive Landscape

The competitive environment of the US DeepFake AI market comprises global technology firms alongside an active ecosystem of Silicon Valley-based ventures. The leading cloud and cybersecurity companies are embedding detection software in their existing enterprise cybersecurity packages, leveraging their well-established customer bases and distribution channels.

At the same time, the US deepfake detection market is exceptionally ripe for specialized vendors with unique innovations in detection algorithm software and forensic investigation tools. These companies differentiate themselves through cutting-edge research in artificial intelligence, live detection, and dedicated services. US governmental organizations and multinational firms show a distinct preference for domestic vendors in their purchases because of national security considerations.

Some of the prominent players in the DeepFake AI Market are:

- Microsoft

- Amazon Web Services (AWS)

- Google

- Intel Corporation

- Veritone

- Cogito Tech

- Primeau Forensics

- iProov

- Kairos

- ValidSoft

- HyperVerge

- Pindrop

- Truepic

- Synthesia

- Sensity AI

- Reality Defender

- Paravision

- BioID

- Blackbird.AI

- Incode

- Other Key Players

Recent Developments

- March 2026: Cybersecurity company iProov warned about an increase in identity attacks fueled by the use of deepfakes, and also scaled up its biometric authentication platform, which can handle over a million transactions per day.

- December 2025: Cyble pointed out that deepfake-as-a-service is on the rise, enabling large-scale AI-powered fraud, impersonation, and cybercrime, raising enterprise risk and increasing demand for deepfake detection and cybersecurity countermeasures.

- August 2025: X (formerly Twitter) saw its AI tools, including the Grok ecosystem, associated with increased deepfake content circulation, emphasizing challenges in content moderation, misinformation control, and the urgent need for platform-level AI governance frameworks.

- May 2024: McAfee partnered with Intel to develop AI-powered deepfake detection leveraging NPU-enabled processors, aiming to enhance real-time media authentication, strengthen cybersecurity infrastructure, and address rising threats from synthetic media manipulation technologies.

Report Details

| Report Characteristics | Details |
| --- | --- |
| Market Size (2026) | USD 57.7 Mn |
| Forecast Value (2035) | USD 932.4 Mn |
| CAGR (2026–2035) | 36.2% |
| Historical Period | 2021 – 2025 |
| Forecast Period | 2027 – 2035 |
| Base Year | 2025 |
| Estimate Year | 2026 |
| Segments Covered | By Offering (Software and Service), By Technology (Generative Adversarial Networks (GANs), Autoencoders, Recurrent Neural Networks (RNNs), Transformative Models, Natural Language Processing (NLP), and Others (Blockchain, Metadata Analysis)), By End User (BFSI, Telecom, Government, Healthcare, Legal, Media & Entertainment, Retail & E-commerce, and Others) |
| Country Coverage | The US |

Frequently Asked Questions

How big is the US DeepFake AI Market?

The US DeepFake AI market is valued at USD 57.7 million in 2026 and is projected to reach USD 932.4 million by 2035, reflecting sustained and exponential expansion driven by escalating synthetic media threats and corporate fraud concerns.

What is the CAGR of the US DeepFake AI Market from 2026 to 2035?

The US DeepFake AI market is expected to grow at a CAGR of 36.2 percent from 2026 to 2035, indicating robust long-term growth fueled by accelerating adoption of detection software and authentication tools across American enterprises and federal agencies.

What factors are driving the growth of the US DeepFake AI Market?

Key drivers include escalating deepfake-enabled financial fraud targeting US banks, national security and election integrity imperatives identified by CISA and DHS, the proliferation of AI-generated disinformation on major social platforms, and rising demand for digital evidence authentication in federal and state courts.

What are the major trends in the US DeepFake AI Market?

Major trends include the adoption of Content Authenticity Initiative standards, the integration of real-time audio deepfake detection in corporate call center security, state-level legislative activity addressing non-consensual deepfakes, and the emergence of specialized managed detection services for enterprise security teams.

Who are the key players in the US DeepFake AI Market?

Microsoft, Google, Intel, Veritone, Pindrop, Truepic, Sensity AI, Reality Defender, iProov, BioID, Deepware, and specialized American forensic software vendors and managed security service providers are prominent US DeepFake AI market participants.

How is the US DeepFake AI Market segmented?

This market is segmented by offering (software and services), technology (GANs, autoencoders, RNNs, transformative models, NLP, and blockchain), and end user (BFSI, telecom, government, healthcare, legal, media and entertainment, and retail and e-commerce).